Fast contrast and naturalness preserving image recolouring for dichromats


Xinyi Wang*, Zhenyang Zhu*, Xiaodiao Chen, Kentaro Go, Masahiro Toyoura, Xiaoyang Mao


Abstract

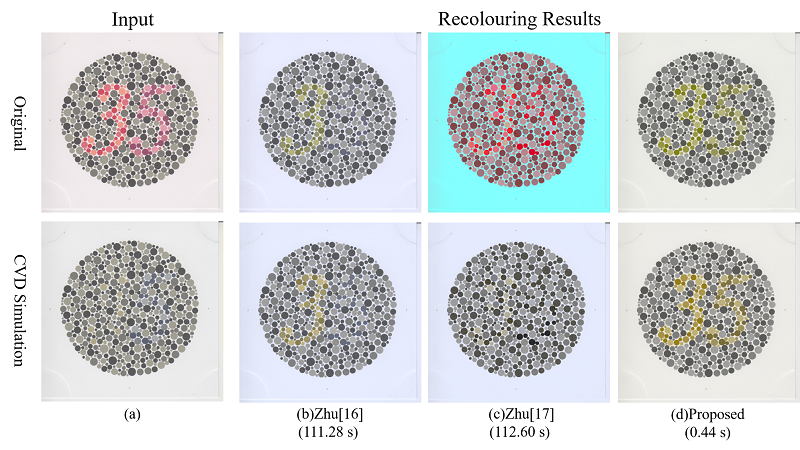

People with colour vision deficiency (CVD) may have difficulty recognising or discriminating colours. To make visual details more perceptible to them, several methods have been developed to enhance colour contrast. More recently, researchers have recognised the importance of preserving naturalness in the processed images to avoid decreasing the quality of vision (QOV), and state-of-the-art image recolouring methods have succeeded in achieving both contrast enhancement and naturalness preservation by utilising optimisation models. However, the high computational cost of these methods prevents their wide application in daily life. Moreover, these methods cannot automatically resolve the trade-off between contrast enhancement and naturalness preservation. This paper proposes Optimized Dichromacy Projection (ODP), a novel high-speed image recolouring technique for dichromacy compensation. ODP achieves both contrast enhancement and naturalness preservation by computing an optimized projection from the colour gamut of normal vision to the colour gamut of dichromacy, and it automatically adapts the weights of contrast enhancement and naturalness preservation to the properties of individual images. Qualitative, quantitative, and subjective evaluation experiments show that the proposed method can generate images of quality competitive with the state-of-the-art methods while drastically reducing computation time.


Citation

Xinyi Wang, Zhenyang Zhu, Xiaodiao Chen, Kentaro Go, Masahiro Toyoura, Xiaoyang Mao, "Fast Contrast and Naturalness Preserving Image Recolouring for Dichromats," Computers & Graphics, first online 2021-05-01.

Acknowledgment

This work is supported by JSPS Grants-in-Aid for Scientific Research, Japan (Grant Nos. 17H00738 and 20J15406). The authors would like to thank all the participants for evaluating the proposed method and giving us valuable comments.